Salesforce Commerce Cloud Data Purging: Keep Your Store Fast & Efficient

Why Data Purging Matters in SFCC

As your Salesforce Commerce Cloud (SFCC) store grows, so does your database. Keeping unnecessary data can slow down performance, affect customer experience, and increase costs. Let’s talk about why and how you should purge outdated data to keep your store lightning-fast.

Technical Considerations

Many SFCC merchants wonder:

How many orders can my SFCC instance handle?

How many customer records can I store before performance drops?

The truth is, there’s no magic number. It depends on your store's setup, integrations, and customizations. Poorly optimized logic can hurt performance no matter how much data you store.

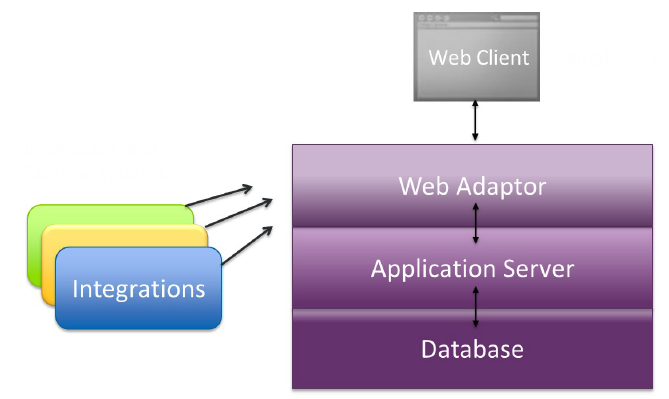

How SFCC Handles Data Load

Your storefront’s speed depends on several factors, including:

✅ Web adapters & servers

✅ Application & database processing times

✅ HTML complexity & rendering

🔹 The faster you process transactions at the web tier, the smoother your store runs. Reducing unnecessary transactions to the database is key to keeping your store efficient.

The Need for Data Purging

📌 Keeping old data can slow down queries and impact storefront speed.

📌 Records still updated frequently? They are probably still in use, so you may not need to purge them.

📌 Stale records? Time to clean house.

A great strategy is to analyze your business objects and track how they evolve over time. For example, older orders can be purged to maintain a lean database. Some merchants only keep orders from the last 90 days, leading to faster searches and exports.

🚨 But beware! If you rely on order history for personalized promotions, you may need a different approach.
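
To make the 90-day idea concrete, here is a minimal sketch of how a retention cutoff could be computed and applied to a list of orders. It works on plain records rather than real SFCC order objects, and the helper names (`retentionCutoff`, `partitionByRetention`) are hypothetical, not part of any SFCC API.

```javascript
// Illustrative helpers only: these names are assumptions, not SFCC APIs.
var MS_PER_DAY = 24 * 60 * 60 * 1000;

/** Returns the Date before which records count as outside the retention window. */
function retentionCutoff(now, retentionDays) {
    return new Date(now.getTime() - retentionDays * MS_PER_DAY);
}

/**
 * Splits plain order records ({ orderNo, creationDate }) into the order
 * numbers to keep and the order numbers that are old enough to purge.
 */
function partitionByRetention(orders, now, retentionDays) {
    var cutoff = retentionCutoff(now, retentionDays);
    var result = { keep: [], purge: [] };
    orders.forEach(function (order) {
        if (order.creationDate < cutoff) {
            result.purge.push(order.orderNo);
        } else {
            result.keep.push(order.orderNo);
        }
    });
    return result;
}
```

If promotions depend on order history, the same split could be run with a longer window for those customers instead of a single global value.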

How SFCC Handles Data Purging

Automated Cleanups with "PurgeObsoleteData"

SFCC includes a built-in system job to clean up old data:

Customer retention settings: Go to Administration > Global Preferences > Retention Settings

Order retention settings: Go to Merchant Tools > Site Preferences > Order Data Settings

💡 Tip: If an external system already holds your orders, there is usually little reason to keep older ones in SFCC as well. Purging them will improve performance.

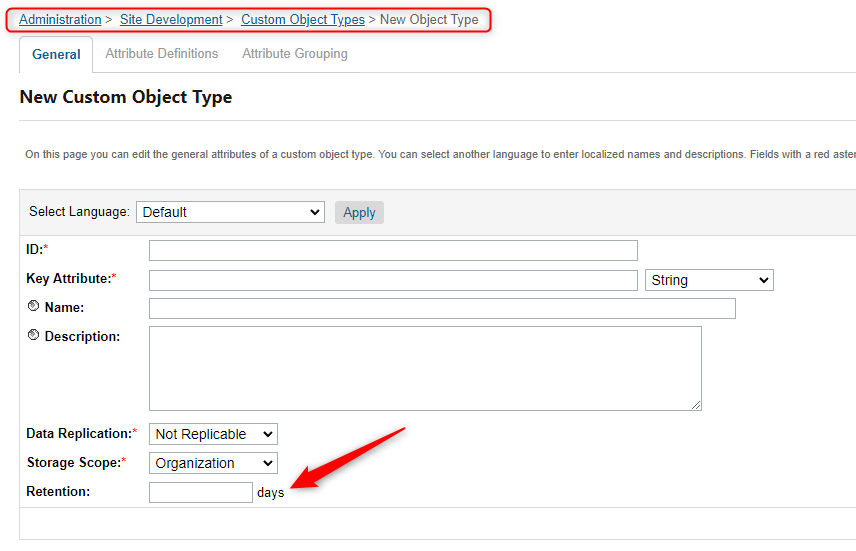

Custom Object Purging

Custom objects are essential in SFCC, but you need to manage them properly. Set a retention period for each custom object type under the “General” tab of its type definition in Business Manager.

Best Practices for Efficient Data Management

Custom Objects & Attributes

⚠️ Watch out for attribute bloat! Too many custom attributes can slow down queries.

✔ Keep only necessary attributes

✔ Remove temporary values (e.g., fraud check after order processing)

✔ Use JSON/XML storage for non-searchable data
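
As a rough illustration of the last two points, several non-searchable values can be packed into a single JSON string and the temporary ones dropped once they are no longer needed. This is only a sketch: the function names and the keys used in the usage note (`fraudScore`, `giftNote`) are hypothetical, and writing the string to a custom attribute would still have to happen inside a transaction in real SFCC code.

```javascript
// Sketch only: helper and key names are assumptions for illustration.

/** Serializes a plain object so it can live in one string attribute. */
function packNonSearchable(values) {
    return JSON.stringify(values);
}

/** Parses the stored string; falls back to an empty object on bad input. */
function unpackNonSearchable(raw) {
    if (!raw) {
        return {};
    }
    try {
        return JSON.parse(raw);
    } catch (e) {
        return {};
    }
}

/** Removes temporary keys (e.g. a fraud check result) after processing. */
function dropTemporaryKeys(raw, temporaryKeys) {
    var data = unpackNonSearchable(raw);
    temporaryKeys.forEach(function (key) {
        delete data[key];
    });
    return packNonSearchable(data);
}
```

For example, `dropTemporaryKeys(packNonSearchable({ fraudScore: 12, giftNote: 'hi' }), ['fraudScore'])` leaves only the gift note in the stored string.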

Optimized Search APIs for Customers & Orders

Instead of querying directly from the database, use SFCC’s search APIs:

✅ Customer Search → Use searchProfiles() and processProfiles() in CustomerMgr

✅ Order Search → Use searchOrders() instead of queryOrders() in OrderMgr
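
Here is a minimal sketch of what calling these search APIs could look like. The manager objects are passed in as parameters so the functions can be exercised outside SFCC; in a cartridge they would typically come from `require('dw/order/OrderMgr')` and `require('dw/customer/CustomerMgr')`. The function names, the query strings, and the choice of `creationDate` and `lastLoginTime` as filter attributes are assumptions for illustration.

```javascript
// Sketch only: OrderMgr and CustomerMgr are injected so this runs outside SFCC.

/** Collects up to `limit` order numbers created before `cutoffDate`. */
function findOrderNumbersBefore(OrderMgr, cutoffDate, limit) {
    var orders = OrderMgr.searchOrders('creationDate < {0}', 'creationDate asc', cutoffDate);
    var orderNumbers = [];
    try {
        while (orders.hasNext() && orderNumbers.length < limit) {
            orderNumbers.push(orders.next().orderNo);
        }
    } finally {
        // Always close the iterator to release its resources.
        orders.close();
    }
    return orderNumbers;
}

/** Counts profiles whose last login is older than `cutoffDate`. */
function countProfilesInactiveSince(CustomerMgr, cutoffDate) {
    var count = 0;
    CustomerMgr.processProfiles(function () {
        count += 1;
    }, 'lastLoginTime < {0}', cutoffDate);
    return count;
}
```

The callback style of processProfiles() avoids holding a large result set in memory, which is why it suits batch jobs better than collecting profiles into an array.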

Use External Systems for Long-Term Storage

SFCC isn’t designed as a system of record. If you need long-term order storage, archive older records externally. This keeps SFCC lean and responsive.
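
One possible way to prepare records for an external archive before purging them is to flatten each order into a small JSON line. This is a sketch under stated assumptions: the field selection, the function names, and the newline-delimited JSON format are illustrative choices, and the input is a plain record rather than a real SFCC order object.

```javascript
// Sketch only: field names and output format are assumptions for illustration.

/** Maps a plain order record to the fields an archive might need. */
function toArchiveRecord(order) {
    return {
        orderNo: order.orderNo,
        createdAt: order.creationDate.toISOString(),
        customerEmail: order.customerEmail,
        total: order.total,
        currency: order.currency
    };
}

/** Serializes records as newline-delimited JSON, one order per line. */
function toArchiveLines(orders) {
    return orders.map(function (order) {
        return JSON.stringify(toArchiveRecord(order));
    }).join('\n');
}
```

The resulting text could be written to a file or sent to whichever system holds long-term order history; that transport step is left out here because it depends on the integration.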

Final Thoughts: Keep Your Store Fast & Scalable

A slow store = lost sales. Purging old, unnecessary data helps improve performance, scalability, and customer experience.